Buying AI compliance tools? Some fleets get safer. Others get burned. Here’s what to know before you sign anything.

For fleets new to AI, early success does not require a complete system overhaul. The fastest wins come from areas where AI immediately removes ambiguity.

"Start where ambiguity is highest and effort is manual. If AI can clarify context, standardize documentation, and close the coaching loop inside current processes, the wins are immediate, and audit readiness improves by default," said Adam Kahn, chief marketing officer at Netradyne.

Those wins often include immediate reductions in safety risks at the edge, faster incident review, improved driver performance through positive reinforcement, and better audit readiness.

How Fleets Are Rethinking Compliance Management

Naeem Bari, co-founder and president of Linxup, described a clear evolution in how fleets manage compliance.

“Previously, you managed compliance for your fleets using reports as a guide,” he said. “You’d run reports, review them to figure out what to do, and then execute.”

Now, fleets are moving toward exception-based management.

“Instead of looking at everything, the system surfaces exceptions as they occur,” Bari said.
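One way to picture the shift Bari describes is as a filter over incoming telematics events: rather than compiling every record into a report, only events that breach a policy threshold reach a manager. The sketch below is illustrative only. The event fields, the threshold values, and the `surface_exceptions` helper are assumptions made for this example, not any vendor's actual data model.

```python
from dataclasses import dataclass

# Hypothetical policy thresholds; real values would come from fleet policy.
POLICY_LIMITS = {
    "speed_over_limit_mph": 10,
    "hard_brake_g": 0.45,
}


@dataclass
class Event:
    driver_id: str
    metric: str   # expected to be a key in POLICY_LIMITS
    value: float


def surface_exceptions(events):
    """Return only the events whose value exceeds the policy limit."""
    return [
        e for e in events
        if e.metric in POLICY_LIMITS and e.value > POLICY_LIMITS[e.metric]
    ]


# Made-up sample events for illustration.
events = [
    Event("D-101", "speed_over_limit_mph", 4),
    Event("D-102", "speed_over_limit_mph", 14),
    Event("D-103", "hard_brake_g", 0.52),
]

for e in surface_exceptions(events):
    print(f"{e.driver_id}: {e.metric} = {e.value}")
```

In this toy data set, only the second and third events exceed their limits, so those are the only ones a manager would see.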

The next phase is insights-based management, where fleets act before problems occur rather than reacting after the fact.

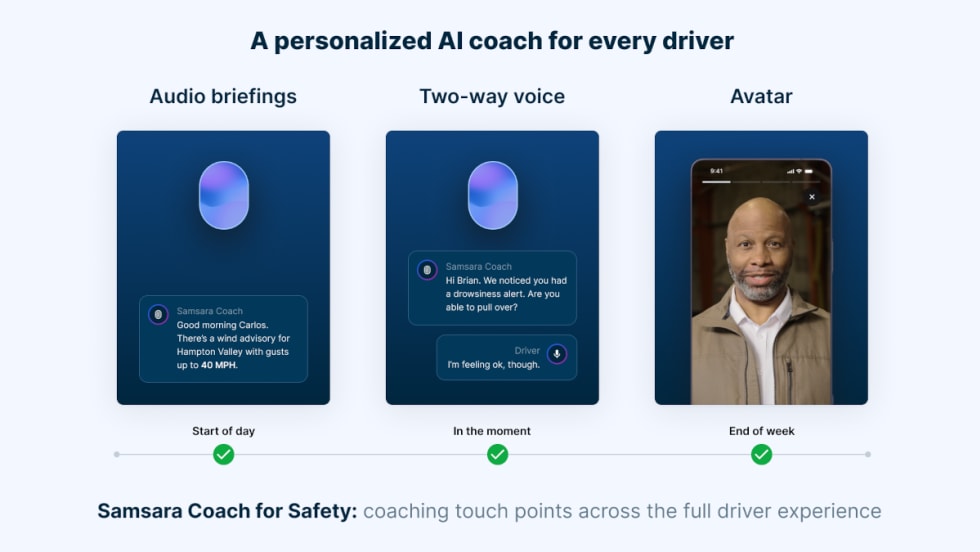

Automated coaching workflows play a central role. Fleets can focus on a small number of high-impact behaviors, surface events with AI, and document coaching in the same system. Over time, this improves safety and automatically generates compliance documentation.

Standardized reporting is another early benefit. AI can summarize events, coaching activity, and outcomes into monthly reports that make sense to leadership, insurers, and regulators.

Where Fleets Go Wrong with AI and Compliance

Many AI-related compliance failures stem from misuse, misunderstanding, or misplaced expectations.

“Treating AI as a punitive tool is a common mistake,” Kahn said. “AI should be positioned as a validation and coaching tool, not a replacement for human decision-making.”

Bari was blunt about what happens when fleets stop at alerts.

“If alerts are flagged and no one reviews them, coaches drivers, or documents corrective action, the long-term impact is limited,” he said. “In some cases, it can actually increase liability.”

Another common misconception is that installing AI tools automatically makes a fleet compliant.

“Regulators and insurers want to see evidence of consistent use,” Bari said. “They want documentation that shows issues were identified, addressed, and followed up on over time.”
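The evidence Bari describes can be thought of as a closed loop for each alert: flagged, reviewed, coached, and followed up. A minimal sketch, assuming a hypothetical alert record with one date field per stage (the field names, the sample dates, and the `open_gaps` helper are invented here for illustration), shows how a fleet might list alerts whose loop was never closed.

```python
from dataclasses import dataclass
from typing import Optional

# Stages an alert is assumed to pass through after flagging, in order.
STAGES = ("reviewed_on", "coached_on", "followed_up_on")


@dataclass
class AlertRecord:
    alert_id: str
    flagged_on: str
    reviewed_on: Optional[str] = None
    coached_on: Optional[str] = None
    followed_up_on: Optional[str] = None


def open_gaps(records):
    """Map each incomplete alert to the first stage with no documentation."""
    gaps = {}
    for r in records:
        for stage in STAGES:
            if getattr(r, stage) is None:
                gaps[r.alert_id] = stage
                break
    return gaps


# Made-up sample records for illustration.
records = [
    AlertRecord("A-1", "2024-03-01", "2024-03-02", "2024-03-04", "2024-03-18"),
    AlertRecord("A-2", "2024-03-03", "2024-03-03"),
    AlertRecord("A-3", "2024-03-05"),
]

print(open_gaps(records))
```

A report like this surfaces the gap before an auditor or insurer does: alerts that were flagged but never reviewed, or reviewed but never coached.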

Mark Schedler, senior editor at J. J. Keller and Associates, Inc., cautioned against overreliance on automation, especially in regulatory interpretation and privacy considerations.

“AI can sound authoritative when answering regulatory questions and still be wrong,” he said. “Verification remains critical.”

Eric Lambert, VP legal and employment counsel specializing in transportation and logistics at Trimble, emphasized that governance, not technology, is often the missing piece.

“Starting with the technology instead of a clear business problem is a frequent mistake,” he said. “AI works best when fleets treat it as part of a broader data and compliance strategy, not a standalone fix.”

Kahn also addressed the idea that AI replaces teams.

"Treat AI as decision-support with receipts: it moves teams from volume and velocity problems to judgment and governance, with explainable artifacts you can stand behind in audits," Kahn recommended.

Lambert pushed back on the perception that AI is only for large fleets.

“Smaller and mid-sized transportation companies can leverage AI for specific, high-value tasks,” he said. “The ROI from faster audits and reduced compliance penalties can make AI tools economically viable.”

Where Does AI Still Struggle for Fleets?

AI struggles with uncommon or nuanced scenarios, such as unusual site layouts, atypical weather patterns, and non-standard driving behavior. It excels at detecting observable behavior but does not infer intent or interpret regulations.

“AI should be treated as a trusted companion riding shotgun,” Bari said. “Not a final decision-maker.”

Schedler noted challenges with exemptions, state-level regulations, and system integration. Lambert emphasized the “garbage in, garbage out” problem and the risks of unexplainable AI in audits.

The Smartest First Step Forward Is Validation

Start with validation, not enforcement.

"Validation is the smartest first step. Pick a high-friction workflow, instrument it end-to-end, and let AI prove it can reduce workload and risk before you expand," Kahn added.

Bari encouraged fleets not to delay.

“Delaying adoption creates its own risk,” he said. “Modern compliance works best when fleets think in terms of a full loop: policy, monitoring, and follow-through.”

Schedler emphasized digitizing and centralizing records and using reputable ELDs listed on FMCSA’s registry.

Lambert advised fleets to treat AI as a data project, identify one high-value pain point, clean and harmonize data, prove ROI, and expand with governance in place.

What Questions Should Fleets Ask AI Vendors?

Across all responses, the guidance aligned. Fleets should ask:

Is this real AI, and what behaviors does it actually detect?

Where are AI decisions made, on the vehicle or in the cloud?

How is accuracy measured, validated, and monitored?

Can the system explain why it flagged an event?

Does it support policy alignment, monitoring, and documented action?

Is the ELD FMCSA-registered and compliant?

How does it handle exemptions and regulatory changes?

What security, privacy, and data usage protections exist?

Is fleet data used to train models?

As Bari summed it up, “Don’t be sold on technology. Be sold on a solution.”

Used well, AI strengthens compliance. Used poorly, it exposes gaps fleets may not know they have. That is why understanding the reasons adoption is uneven (Part 1) and what AI can realistically solve today (Part 2) matters just as much as how fleets deploy it.